Demo Videos with Sparse Input Views

4DGaussians RGB (CVPR'24)

GC-4DGS RGB (Ours)

4DGaussians Depth (CVPR'24)

GC-4DGS Depth (Ours)

4DGS RGB (ICLR'24)

GC-4DGS RGB (Ours)

4DGS Depth (ICLR'24)

GC-4DGS Depth (Ours)

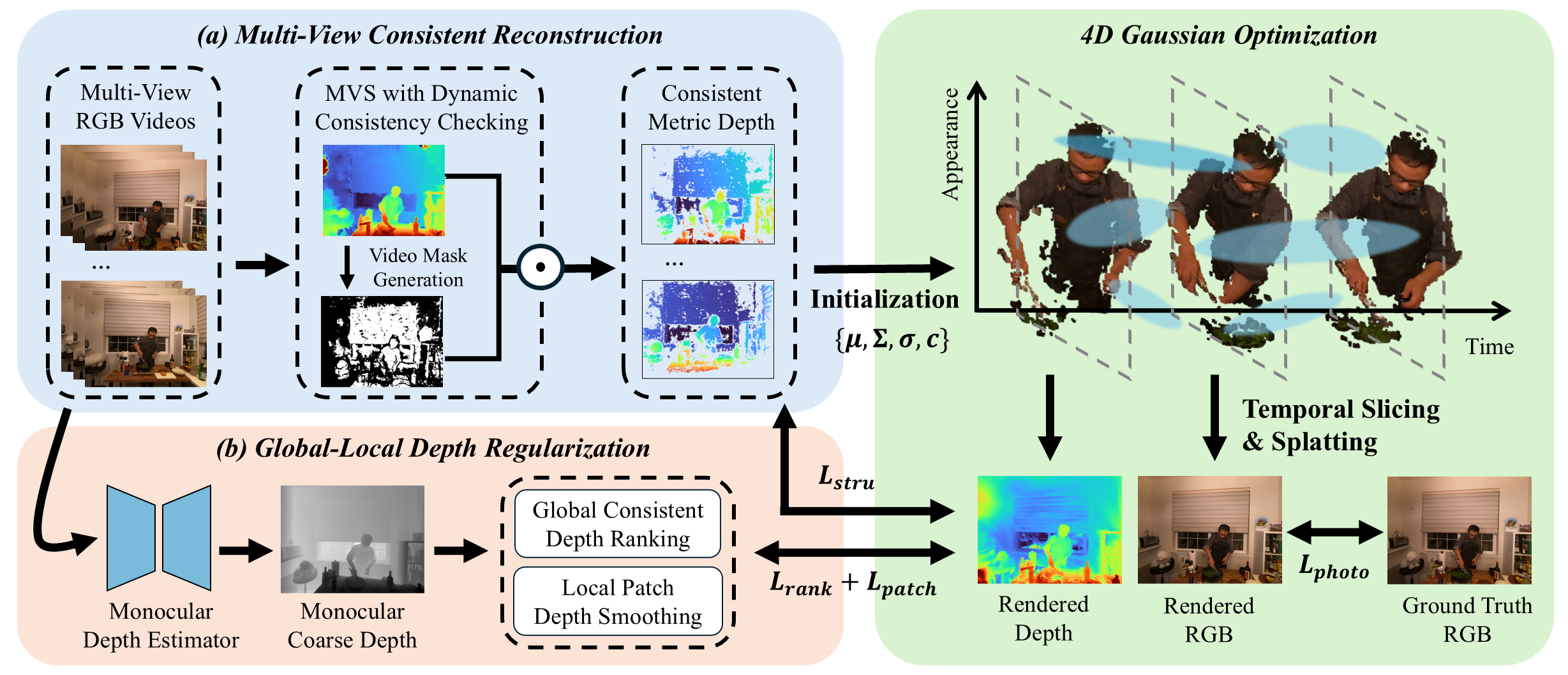

Method Overview

Results

Comparisons with 3 input views

For the N3DV dataset, HyperReel [2] and K-Planes [3] fail to render the scene from so few input views. Although Gaussian Splatting-based approaches improve rendering quality, they struggle to capture fine-grained dynamics (e.g., the dog’s hair) and under-observed geometry (e.g., the mini statue). In contrast, GC-4DGS recovers more textural and structural detail in both the central dynamic areas and the under-observed static regions. For the Technicolor dataset, HyperReel [2] and 4DGaussians [1] produce significantly distorted images, while STG [4] struggles to capture complex dynamics. 4DGS [5] and E-D3DGS [6] are prone to overfitting in regions with limited observations and produce blurred results.

Ablation Studies

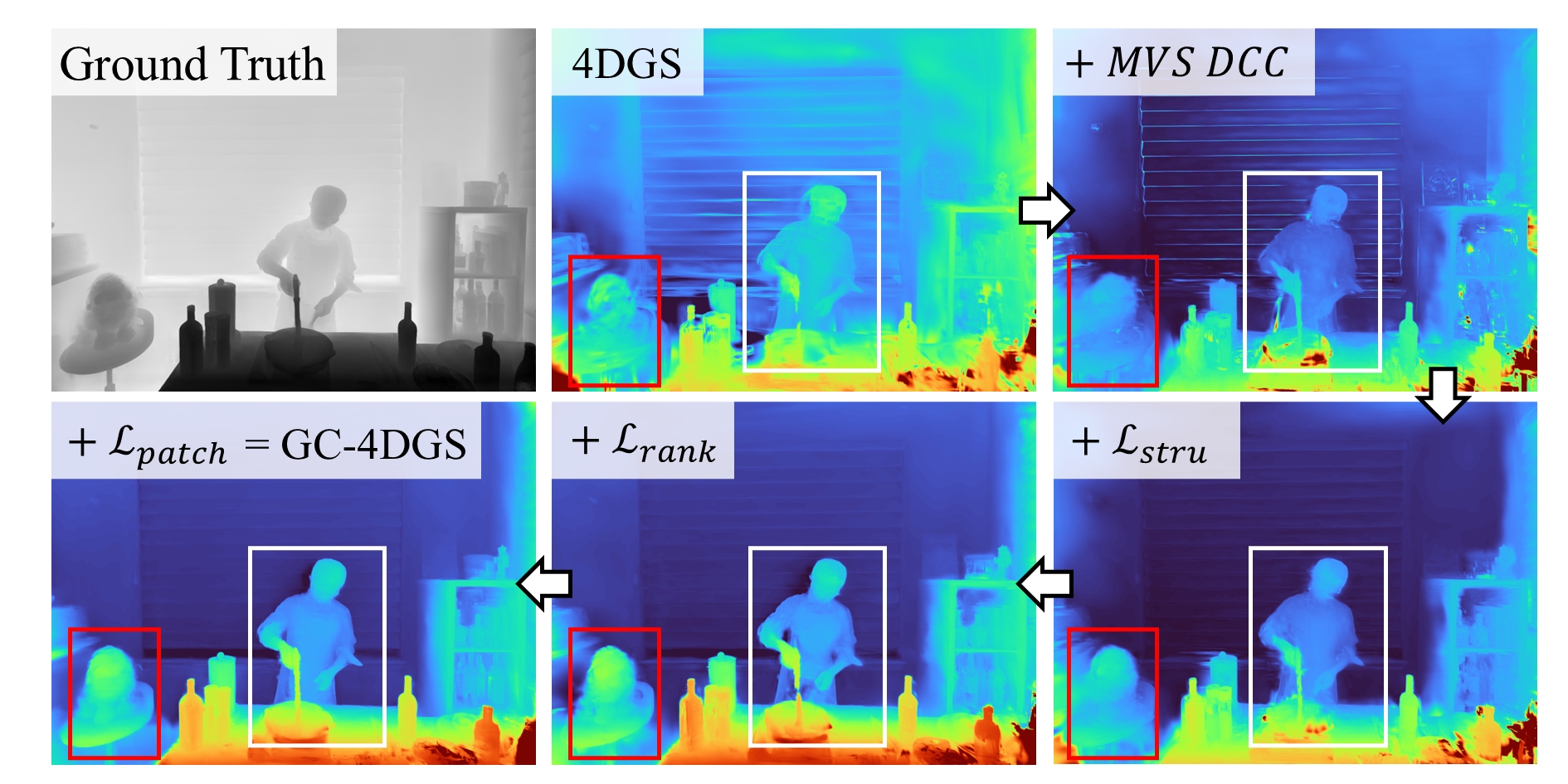

MVS with dynamic consistency checking (DCC) contributes to learning fine-grained 4D geometry. The proposed depth regularization components provide complementary benefits that further improve geometric consistency.
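Because the ablation credits a consistency check on MVS depth, a sketch of the generic idea may help. The snippet below implements a standard forward-backward reprojection check between a reference depth map and one source depth map, of the kind commonly used to filter MVS depth before fusion. It is an illustration under stated assumptions, not the GC-4DGS DCC: the function name `geometric_consistency_mask`, the pinhole intrinsics `K_ref`/`K_src`, the 4x4 reference-to-source extrinsic `T_ref2src`, nearest-neighbour depth sampling, and the thresholds `pix_thresh`/`rel_depth_thresh` are all hypothetical choices, and any handling of temporal dynamics in the actual method is not modelled here.

```python
import numpy as np


def geometric_consistency_mask(depth_ref, depth_src, K_ref, K_src, T_ref2src,
                               pix_thresh=1.0, rel_depth_thresh=0.01):
    """Hypothetical sketch: mark reference pixels whose depth agrees with a source view.

    Generic MVS forward-backward check (not the paper's DCC). Assumes pinhole
    intrinsics with last row [0, 0, 1] and a 4x4 rigid transform ref -> src.
    """
    h, w = depth_ref.shape
    h_s, w_s = depth_src.shape
    v, u = np.mgrid[0:h, 0:w]                      # v = row (y), u = column (x)
    pix = np.stack([u.ravel(), v.ravel(), np.ones(h * w)]).astype(np.float64)
    d_ref = depth_ref.ravel().astype(np.float64)

    # 1) Lift reference pixels to 3D and move them into the source camera frame.
    pts_ref = (np.linalg.inv(K_ref) @ pix) * d_ref
    R, t = T_ref2src[:3, :3], T_ref2src[:3, 3:]
    pts_src = R @ pts_ref + t

    # 2) Project into the source image and sample its depth (nearest neighbour).
    proj = K_src @ pts_src
    in_front = proj[2] > 1e-8
    z_safe = np.where(in_front, proj[2], 1.0)
    us = np.round(proj[0] / z_safe).astype(int)
    vs = np.round(proj[1] / z_safe).astype(int)
    inside = in_front & (d_ref > 0) & (us >= 0) & (us < w_s) & (vs >= 0) & (vs < h_s)
    us_c, vs_c = np.clip(us, 0, w_s - 1), np.clip(vs, 0, h_s - 1)
    d_src = depth_src[vs_c, us_c].astype(np.float64)

    # 3) Lift the sampled source depth back to 3D and reproject into the reference view.
    pix_src = np.stack([us_c, vs_c, np.ones_like(us_c)]).astype(np.float64)
    pts_back_src = (np.linalg.inv(K_src) @ pix_src) * d_src
    pts_back_ref = R.T @ (pts_back_src - t)
    proj_back = K_ref @ pts_back_ref
    z_back = proj_back[2]
    ok_back = z_back > 1e-8
    zb_safe = np.where(ok_back, z_back, 1.0)
    u_back, v_back = proj_back[0] / zb_safe, proj_back[1] / zb_safe

    # 4) Keep pixels whose round trip lands close in both image position and depth.
    pix_err = np.hypot(u_back - pix[0], v_back - pix[1])
    depth_err = np.abs(z_back - d_ref) / np.maximum(d_ref, 1e-8)
    mask = (inside & ok_back & (d_src > 0)
            & (pix_err < pix_thresh) & (depth_err < rel_depth_thresh))
    return mask.reshape(h, w)
```

A pixel passes only if its depth, pushed into the source view and pulled back, lands within `pix_thresh` pixels of where it started and agrees in depth to within `rel_depth_thresh`; a practical pipeline would usually require agreement across several source views before trusting a depth value.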

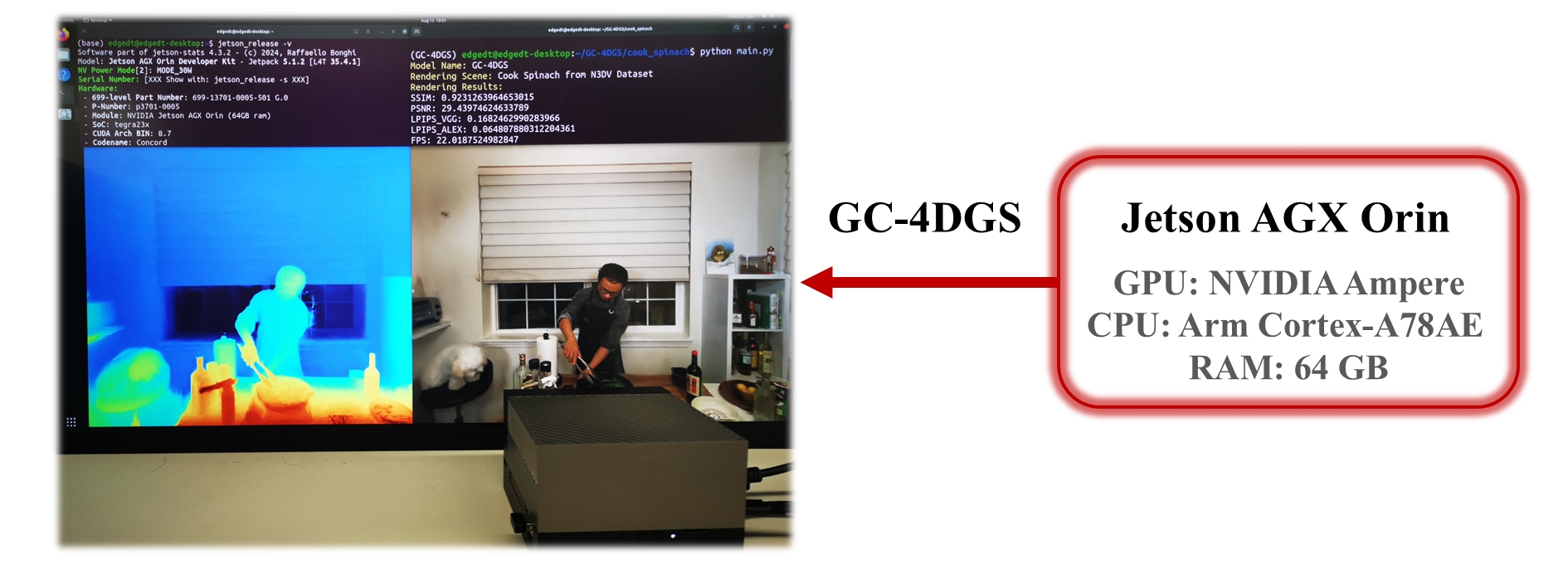

Deployability on Edge Devices

To further evaluate the deployability of GC-4DGS on resource-constrained edge devices in IoT systems, we conduct experiments on the NVIDIA Jetson AGX Orin Developer Kit. The model is first trained in the cloud and then deployed on the edge device for testing. The results show that GC-4DGS is readily deployable on IoT edge devices while consuming limited computational resources, highlighting its potential for AIoT applications.

References

[1] G. Wu, T. Yi, J. Fang, L. Xie, X. Zhang, W. Wei, W. Liu, Q. Tian, and X. Wang, “4D Gaussian splatting for real-time dynamic scene rendering,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 20310–20320.

[2] B. Attal, J.-B. Huang, C. Richardt, M. Zollhoefer, J. Kopf, M. O’Toole, and C. Kim, “HyperReel: High-fidelity 6-DoF video with ray-conditioned sampling,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 16610–16620.

[3] S. Fridovich-Keil, G. Meanti, F. R. Warburg, B. Recht, and A. Kanazawa, “K-Planes: Explicit radiance fields in space, time, and appearance,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 12479–12488.

[4] Z. Li, Z. Chen, Z. Li, and Y. Xu, “Spacetime Gaussian feature splatting for real-time dynamic view synthesis,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 8508–8520.

[5] Z. Yang, H. Yang, Z. Pan, and L. Zhang, “Real-time photorealistic dynamic scene representation and rendering with 4D Gaussian splatting,” in ICLR, 2024.

[6] J. Bae, S. Kim, Y. Yun, H. Lee, G. Bang, and Y. Uh, “Per-Gaussian embedding-based deformation for deformable 3D Gaussian splatting,” in European Conference on Computer Vision. Springer, 2025, pp. 321–335.